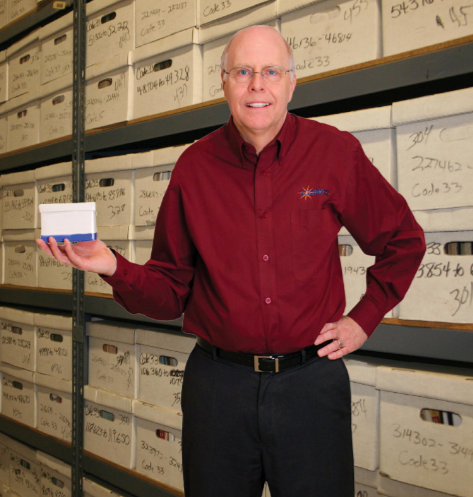

Consultant

Larry Phelps

Laserfiche Consultant

Laserfiche Consultant with over 20 years of experience. My passion in life is to help people and organizations use technology to be more efficient and effective. If you are looking for ways to stand out from the pack, you have come to the right place.

My Passion

Helping organizations like yours increase productivity by going paperless

I have been in the computer technology field for over thirty years as a programmer, test engineer, software engineering manager, sales engineer, sales manager, and founder/owner of a computer technology company.

It has been my privilege to work on consulting projects for a variety of organizations and individuals. I have worked on projects for IBM, the U.S. Air Force, the IRS, Bell Labs, and Compaq Computers, as well as hundreds of small businesses, community action agencies, non-profits, attorneys’ offices, cities, and other local government agencies.

I have helped many small to mid-size organizations move their IT to Cloud Computing. Also, I have helped organizations streamline business processes using the Laserfiche enterprise content management system.

I work for Hemingway Solutions, a Laserfiche solution provider, as Director of Sales and Marketing. https://www.hemingwaysolutions.net/

We help community action agencies, non-profits, and small to mid-size businesses go paperless and automate business processes.

Are You Frustrated?

Are you frustrated with your current Laserfiche support or lack thereof?

Productivity Improvement

Do you feel like you are not seeing the improvement you were hoping for from your Laserfiche implementation? I have helped organizations like yours find simple solutions that help their staff become more efficient and effective.

Looking for a way for your clients to securely upload documents to you?

I have helped many organizations implement a portal that allows clients to safely upload documents and automate the processing of those documents without risking their security.

Industries

List of Industries

Community Action Partnership

Community Health

Insurance

City Government

County Government

Finance

Collection Agency

Child Care

Head Start

Manufacturing

Distribution

Waste Management

Education – School District

Title Insurance

Public Utility Company

Energy Services

Public Health

Small to Mid-Size Business

- AI and Machine Learning

- Upgrades and Migrations

- Application Approval Process

- Case Management

- AI Invoice Capture

- AP Approval Automation

- Dashboards and Analytics

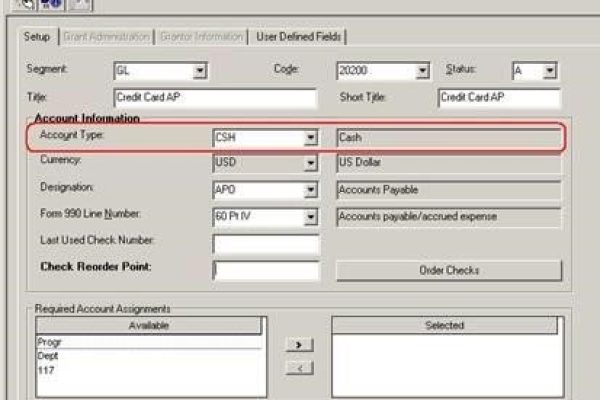

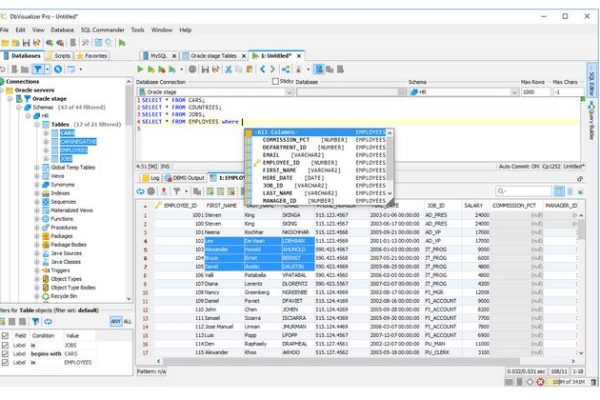

- ERP Integrations such as Abila, Great Plains Dynamics, Sage, and Tyler

- Insurance Application Automation

- Loan Application

- Expense Report Automation

- Contract Management

- Mail Room Automation

- Electronic Forms

- Remote Work

- Automated Document Filing

- Client File Upload

- Data Capture (AI-based)

Laserfiche Consulting by Department

- Case Management

- Energy Assistance

- Housing

- Accounting

- HR

- Public Works

- City Clerk

- Finance

- Collections

- IT

- Compliance

- Legal

- Administration

- Purchasing

- Engineering

Laserfiche Integrations

- AI API

- Microsoft Dynamics

- ESRI GIS

- Abila MIP

- Tyler

- PeopleSoft

- SoftPak (IBM Series)

- AccountMate

- Sage Intacct

- NetSuite

- Great Plains Dynamics

- and many SQL applications

Laserfiche Consulting Projects We Have Done

- Minnesota Valley Community Action Partnership Energy Assistance Approval

- Community Action Partnership of Washington Ramsey – Energy Assistance Approval and Weatherization

- Community Health Centers – Invoice Capture

- City of Detroit Lakes – Permit Application Approval

- Viking Materials – AP Automation

- Aspen Waste – AP Automation

- Star Tribune – AP Automation and PeopleSoft Integrations

- Lakes and Pines Community Action Council – Energy Assistance

- City of Ramsey – GIS Integrations

- City of Shoreview – As-Builts

- Twin Rivers Unified School District – School Plan for Student Achievement (SPSA)

- Agquest/Harvestland – Loan Processing

- IC System – Mailroom Automation, Collection Process Automation

- Douglas County, Colorado – Forms Portal to multiple agencies

- Kids Quest – Student Safety

- Northeast Residence – Client records

- Mahube-Otwa Community Action Partnership – Energy Assistance Approval

- Kootasca Community Action – Energy Assistance Approval

- Rice Creek Watershed – Application

- Coon Creek Watershed – GIS integration

- JT Miller – Insurance Documents

- Scott Carver Dakota Community Action – Energy Assistance Approval Process

- Wright County Community Action Agency – Energy Assistance

- Community Action Hennepin – Energy Assistance Application approval, Water approval process, Housing

Laserfiche Products

- Laserfiche RIO

- Laserfiche Avante

- Laserfiche Workflow

- Laserfiche Forms

- Laserfiche Cloud

- Laserfiche API

- Laserfiche Quick Fields

Testimonials

What My Clients Say

“I cannot even begin to express how elated I am with this product!!!!!!

Even when there are data elements that need to be fixed, the process is so painless and intuitive, and easy that it is absolutely a JOY to work in the system.

You have been so supportive and even suggesting fixes I would not have required. I get the feeling he is just as invested in getting us up and running as I am.

This has been the best software rollout experience I have ever had, and I have launched a LOT of software programs (and written a few myself).

THANK YOU for all of your help and support!!!!!!!!”

Dee Bradshaw

Director of Purchasing

Community Health Centers, Inc

“In the insurance industry, especially in the Midwest, many things are handled by a handshake. People can make promises, but it is the integrity of the individual that counts. For that reason, I chose Larry and his company to work with. And that hunch paid off: we have been working together for over 15 years.”

Dirk Miller

JT Miller Insurance Co

“Larry and Hemingway Solutions have been amazing. I’d recommend them to anyone.

If you are on the fence, I just say go for it, you will be glad you did.”

Corrine Schmidt, Administrator

Northeast Residence

“They are a company you can be creative with. They work with us as if they are a part of our company.

The accounts payable workflow they created for us was comprehensive and well done.

And it has worked for us for almost 10 years.”

Thor Nelson

Aspen Waste Systems

“We were up and running on a workflow within a month. The workflow they created for us mimicked our paper flow, so it was easy for our staff to use.

By digitizing applications and automating the approval process with Laserfiche workflow, we have significantly improved crisis response time.

They did an amazing job working with our staff.”

Catherine Fair

Community Action Partnership Ramsey Washington

Larry and his Laserfiche team have helped us save hundreds of hours.

He helped us with the storage and retrieval of our as-builts.

I really appreciate everything they have helped me with over the past 10 years.

“We are in a highly regulated industry, so it is important to have secure and quick access to our important documents. And Laserfiche does this very well for us.

…they are very great to work with, their rapport with our administration staff has really been a winning solution for us”

David Arcand

New Horizon’s Kid Child Care and Academy

“We were very impressed with the amount of support we received from Larry and his team during our conversion, which allowed us to make our implementation date.

Their experience with PeopleSoft in prior conversions meant they could give us all the help we needed to retrieve images from within PeopleSoft.”

Corey Kuester

Star Tribune